There is a subtle frustration many creators rarely articulate: having ideas for music without the technical means to express them. Traditional production tools assume fluency in software, structure, and sound design. In contrast, an AI Music Generator reframes the starting point entirely. Instead of asking users to construct music piece by piece, it allows them to begin with intent—phrases, moods, fragments of lyrics—and translate that into something audible. This shift is not just about convenience; it changes who gets to participate in music creation at all.

What initially looks like simplification is actually a redefinition of workflow. Rather than removing complexity, the system relocates it—from user input to model interpretation. The result is a creative environment where describing becomes composing.

Understanding How Language Maps Into Musical Structure

Why Text Inputs Carry More Information Than Expected

When users type a short phrase such as “melancholic piano with slow progression,” they are unknowingly embedding multiple layers of instruction:

- Emotional tone (melancholic)

- Instrumentation (piano)

- Tempo (slow)

- Structural pacing (progression)

In my testing, the system appears to parse these into internal musical parameters rather than treating them as vague suggestions. The output often reflects consistent tempo and tonal alignment with the original phrasing, suggesting a relatively stable mapping between language and sound.

How Semantic Interpretation Becomes Musical Decision Making

The model seems to convert text into:

- Scale selection (major vs minor)

- Rhythmic density

- Instrument layering

- Dynamic variation

This is not a one-to-one translation but a probabilistic interpretation. That explains why two generations with identical prompts may feel similar in mood but differ in structure.

Inside the Generative Engine and Model Behavior

Predictive Composition Instead of Assembly

Unlike traditional loop-based tools, the system does not appear to rely on prebuilt segments. Instead, it generates sequences continuously, similar to how language models predict the next word.

This applies to:

- Melody progression

- Chord transitions

- Rhythmic patterns

The continuity in generated tracks suggests that the model maintains internal coherence over time, rather than stitching fragments together.

Layer Synchronization Across Musical Components

A key observation is how different layers remain aligned:

- Vocals follow phrasing naturally

- Instrumentation supports emotional tone

- Transitions between sections feel intentional

This indicates multi-layer generation rather than independent track assembly.

Step-by-Step Creation Flow Based on Actual Usage

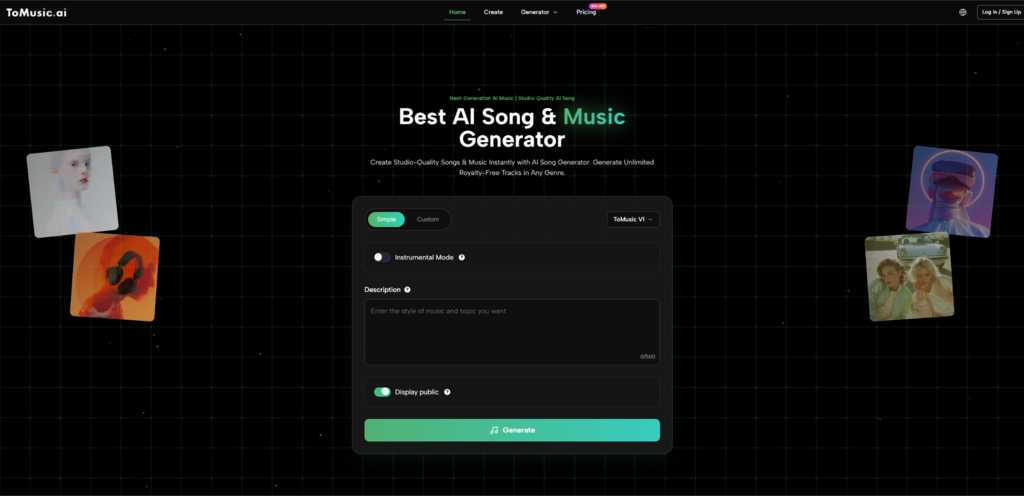

Step 1: Enter Text Description or Lyrics

Users begin by inputting either:

- A descriptive prompt

- Full or partial lyrics

The system accepts relatively flexible input formats without requiring structured syntax.

Step 2: Select Mode and Adjust Creative Direction

Two main approaches are available:

- A simplified mode that automates decisions

- A custom mode allowing control over style, vocals, and structure

This step significantly influences output consistency.

Step 3: Generate and Review Output

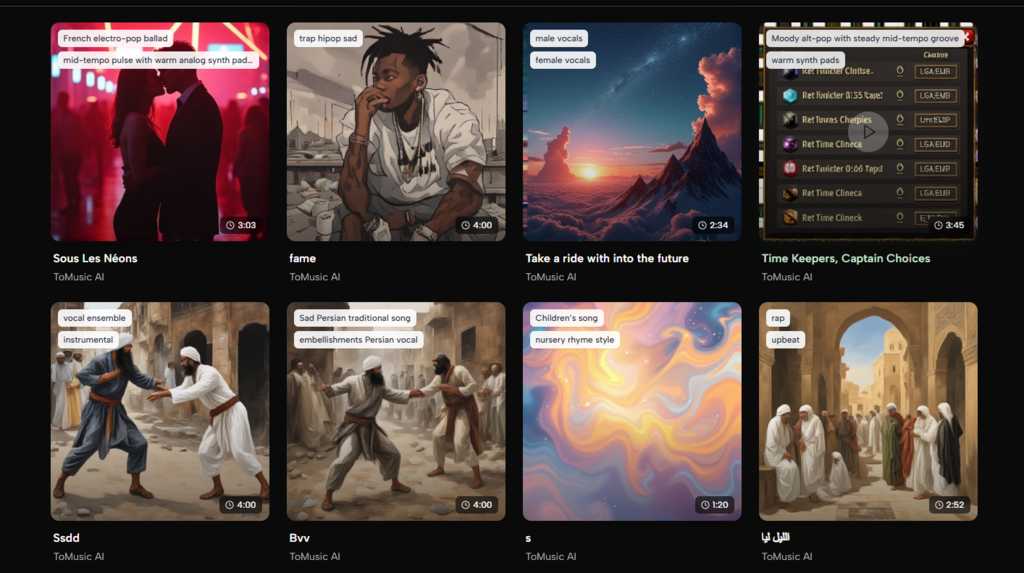

The system produces a complete track, including arrangement and optional vocals. Users can regenerate variations if the result does not align with expectations.

Comparing This Approach With Traditional Production Tools

| Aspect | Traditional Workflow | AI-Based Workflow |

| Input method | MIDI, DAW editing | Natural language |

| Skill requirement | High | Low to moderate |

| Iteration speed | Slow | Fast |

| Control precision | High | Medium |

| Accessibility | Limited | Broad |

The main difference lies not in capability, but in entry point. One begins with structure, the other with intention.

Where This Workflow Actually Fits in Practice

Content Creation and Short-Form Media

For creators producing frequent video content, the ability to generate music quickly reduces dependency on external libraries. The speed of iteration is particularly valuable when testing multiple creative directions.

Rapid Prototyping for Ideas

Writers or filmmakers can use generated tracks as placeholders. Even if not used in final production, they help define tone early in the process.

Personal Expression Without Technical Barriers

For users without formal training, this becomes a direct channel from thought to sound. The barrier shifts from “learning tools” to “articulating ideas.”

Where Limitations Still Appear in Real Use

Dependence on Prompt Clarity

Outputs vary significantly based on how precisely ideas are described. Vague prompts often lead to generic results.

Partial Loss of Fine Control

While overall structure is coherent, detailed adjustments—such as exact chord progression—remain difficult to specify.

Variation Between Generations

Even with identical inputs, results can differ. This unpredictability can be either a creative advantage or a limitation depending on context.

A Subtle Shift in Creative Ownership

The more interesting implication is not technical but conceptual. When composition becomes language-driven, authorship becomes more abstract. The creator is no longer arranging notes but defining intent.

This shift may feel unfamiliar at first, but it aligns with a broader pattern in creative tools: moving from manual construction toward guided generation.

Looking Ahead at Language-Driven Music Creation

If current behavior is any indication, future systems will likely improve in:

- Structural consistency across longer tracks

- Better alignment between lyrics and melody

- Greater controllability through refined prompts

However, the core idea will likely remain unchanged:

Music creation will continue moving closer to natural expression rather than technical execution.

The most notable change is not that machines can create music, but that humans can now create it differently.

Also Read: 4 Ways Reliable Internet is Crucial for Evolving RTO Policies